
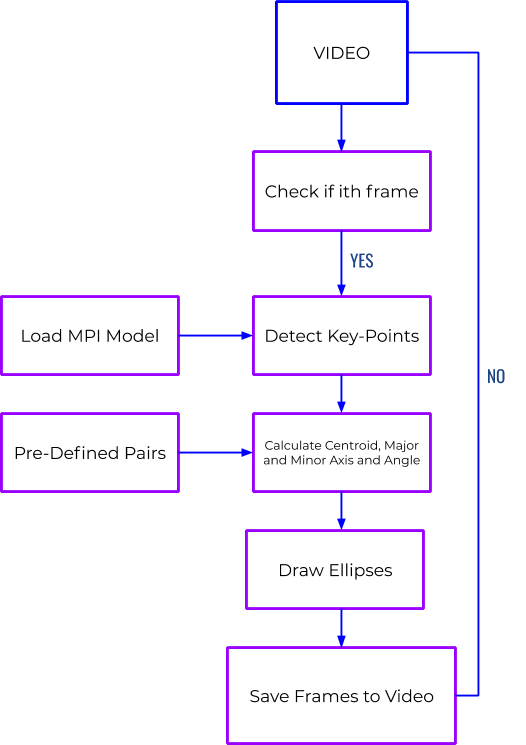

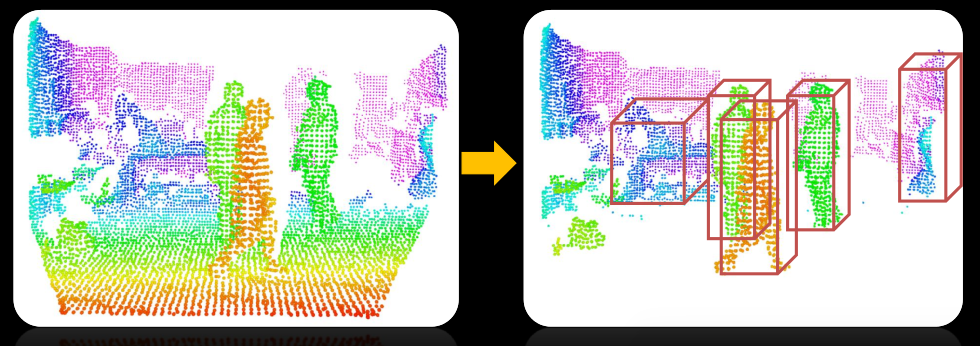

I would like to find a way to identify individual body parts (limbs) in an image, e.g. a forearm or lower leg. The problem is that I can't seem to find a reasonable feature detector or classifier that detects them in a rotation- and scale-invariant way, as objects such as forearms require.

Let's focus on forearms for this discussion. A forearm can appear in many orientations, and its most distinctive features are probably its contour edges. Because a forearm can point in any direction in an image, the problem is complex. One solution I have tried so far, to no avail, is HOG detection. I used a 32x32 window with a variety of input parameters but was never able to get accurate detections in images. I have researched HOG descriptors in some depth, but the variety of forearm poses in my positive training set produces very low detection scores on actual images. I suspect the issue is that the gradients from each positive image do not produce consistent results when accumulated into the histogram.
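To make the suspicion about inconsistent gradient histograms concrete, here is a minimal sketch in plain Python. It is not a full HOG implementation: it builds one global, magnitude-weighted orientation histogram over a 32x32 window (no cells, no block normalization), and the synthetic bar image standing in for a forearm is purely hypothetical. The helper names (`make_bar`, `orientation_histogram`, `align_to_dominant`, `l1_distance`) are my own, not from any library. The last step, circularly shifting the histogram so the dominant bin comes first, is a dominant-orientation normalization borrowed from keypoint descriptors, shown only as one possible direction.

```python
import math

def make_bar(size=32, vertical=False, half_width=4):
    """Hypothetical stand-in for a forearm: a bright bar on a dark background."""
    c = size // 2
    img = [[0.0] * size for _ in range(size)]
    for y in range(size):
        for x in range(size):
            d = (x - c) if vertical else (y - c)
            if abs(d) < half_width:
                img[y][x] = 1.0
    return img

def orientation_histogram(img, bins=9):
    """Single-cell histogram of unsigned gradient orientations (0-180 degrees),
    weighted by gradient magnitude. A simplification of HOG, not the real thing."""
    size = len(img)
    hist = [0.0] * bins
    for y in range(1, size - 1):
        for x in range(1, size - 1):
            gx = img[y][x + 1] - img[y][x - 1]   # central differences
            gy = img[y + 1][x] - img[y - 1][x]
            mag = math.hypot(gx, gy)
            if mag == 0.0:
                continue
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            hist[int(angle / (180.0 / bins)) % bins] += mag
    total = sum(hist)
    return [h / total for h in hist] if total else hist

def align_to_dominant(hist):
    """Circularly shift the histogram so its strongest bin comes first."""
    k = max(range(len(hist)), key=hist.__getitem__)
    return hist[k:] + hist[:k]

def l1_distance(a, b):
    return sum(abs(p - q) for p, q in zip(a, b))

# Same shape, two orientations.
h_horizontal = orientation_histogram(make_bar(vertical=False))
h_vertical = orientation_histogram(make_bar(vertical=True))

print("raw distance:    ", l1_distance(h_horizontal, h_vertical))
print("aligned distance:", l1_distance(align_to_dominant(h_horizontal),
                                       align_to_dominant(h_vertical)))
```

In this toy case the two raw histograms put all their weight in different bins, so a classifier trained on one orientation sees a completely different descriptor for the other, while the aligned histograms coincide. The shift only compensates for rotations in steps of one bin width, and a real HOG descriptor also encodes spatial layout across cells, which a simple shift does not fix. Rotating the window itself, or training on rotated positives, may be needed in practice.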
